A traditional microservice authenticates to one or two APIs using a single credential type. An AI agent authenticates to an LLM provider via API key, an enterprise API via OAuth, a cloud database via managed identity and a tool server via MCP token, all within the same task execution. Each protocol has its own token format, validation requirements and permission model. Your secrets manager covers one of those protocols, your OAuth provider covers another, and neither has visibility into the rest.

This multi-protocol reality is what makes AI agent identity structurally different from traditional workload identity. AI agents, a category growing at a CAGR of roughly 46% and increasingly deployed in production, create authentication gaps that no single-protocol security solution can close. The challenge is not that agents use credentials; every workload does. It is that agents use multiple credential types across multiple trust boundaries simultaneously, and the gaps between those boundaries are where attacks succeed.

AI SDKs Force Insecure Credential Patterns by Design

The problem starts at the implementation level. Major AI SDKs require credentials at client initialization and maintain them throughout execution, creating long-lived secrets that persist in application memory:

- OpenAI’s SDK requires an API key when the client object is created and maintains it for the session’s lifetime.

- Anthropic’s Claude SDK takes an API key when the client is constructed and sends it as the x-api-key header on every request.

- Google’s Generative AI SDK is configured with an API key, sent as the x-goog-api-key header, that persists until the process terminates.

The moment your AI agent starts, it holds static credentials in memory for every LLM provider it connects to.

But LLM access is only one part of the picture. The same agent also needs to authenticate to enterprise data stores, cloud services and third-party APIs. Each of those systems uses a different authentication protocol. Your agent might hold an OpenAI API key, an OAuth access token for Salesforce, an AWS IAM role session token and an MCP bearer token at the same time. Four different credentials, four trust domains, four distinct sets of validation requirements.
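
To make the four-credential picture concrete, here is a minimal Python sketch of an inventory of what one agent process might hold at once. The `HeldCredential` type, the credential names, the protocol labels and the lifetimes are all illustrative assumptions, not values taken from any provider.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Set


@dataclass(frozen=True)
class HeldCredential:
    """One secret the agent process holds in memory (illustrative model)."""
    name: str
    protocol: str                   # how the credential is presented and validated
    trust_domain: str               # who issued it and who will accept it
    expires_at: Optional[datetime]  # None means static: valid until revoked


def static_credentials(creds: List[HeldCredential]) -> List[HeldCredential]:
    """Credentials with no expiry, usable for as long as they sit in memory."""
    return [c for c in creds if c.expires_at is None]


def trust_domains(creds: List[HeldCredential]) -> Set[str]:
    """Distinct trust domains the agent is operating in at the same time."""
    return {c.trust_domain for c in creds}


_now = datetime.now(timezone.utc)

# Hypothetical inventory for the agent described above; lifetimes are assumed.
AGENT_CREDENTIALS = [
    HeldCredential("openai_api_key", "api_key", "llm-provider", None),
    HeldCredential("salesforce_access_token", "oauth2_bearer", "saas",
                   _now + timedelta(hours=1)),
    HeldCredential("aws_session_token", "aws_sigv4", "cloud",
                   _now + timedelta(hours=1)),
    HeldCredential("mcp_bearer_token", "mcp_bearer", "tool-server",
                   _now + timedelta(minutes=15)),
]
```

Under these assumptions, `trust_domains(AGENT_CREDENTIALS)` yields four domains, and `static_credentials(AGENT_CREDENTIALS)` singles out the API key as the one secret with no expiry at all.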

This multi-protocol surface creates attack opportunities that single-protocol solutions miss. A secrets manager can rotate your API keys, but it has no visibility into the OAuth tokens your agent obtains at runtime. An OAuth provider can scope your access tokens, but it cannot manage the API keys your agent’s SDK requires at initialization. Cloud-native managed identities cover your infrastructure layer, but they do not extend to third-party SaaS or LLM providers. Each tool handles one protocol well while the gaps between protocols remain unprotected.

Scope creep compounds the risk. AI agents often receive organization-wide API keys instead of scoped access because fine-grained permission models become operationally complex when agents need to access diverse APIs dynamically. When each protocol requires its own permission configuration, teams default to the broadest access level that works everywhere. The result is static credential exposure across the entire agent lifecycle, with overly broad permissions at every protocol boundary.

How Multi-Protocol Authentication Creates New Attack Surfaces

Traditional workload security challenges like credential exposure, lateral movement and long-lived token hijacking apply to AI agents just as they do to any other workload. These risks are well-documented, and the mitigations (secrets rotation, least-privilege scoping, short-lived tokens) are well-understood. What makes AI agent identity different is the attack surface that emerges when agents operate across multiple authentication protocols simultaneously. The risks below are specific to multi-protocol operation and have no equivalent in single-protocol workload security.

Multi-protocol identity confusion is the most distinctive risk. When an agent switches between execution contexts during a task (enterprise API via OAuth, LLM service via API key, cloud resource via managed identity), permission scopes and token formats change with each interaction. An attacker who compromises one credential type can attempt token substitution across protocol boundaries, reusing an OAuth token where an API key is expected or exploiting trust transitions between authentication models.
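
One way to picture a defense against token substitution is a check that refuses any credential whose type or intended audience does not match the target. The sketch below is a simplified illustration: the `Credential` type, the `EXPECTED_KIND` table and the `*.example.com` hostnames are hypothetical, and a real deployment would also verify signatures and expiry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    kind: str      # e.g. "api_key", "oauth_access_token", "managed_identity_token"
    audience: str  # the service this credential was issued for
    value: str


class CredentialMismatch(Exception):
    """Raised when a credential is presented outside its own protocol boundary."""


# Hypothetical mapping from target service to the only credential kind it accepts.
EXPECTED_KIND = {
    "llm.example.com": "api_key",
    "crm.example.com": "oauth_access_token",
    "db.example.com": "managed_identity_token",
}


def check_credential(target: str, cred: Credential) -> None:
    """Reject credentials of the wrong kind or issued for a different service."""
    expected = EXPECTED_KIND.get(target)
    if expected is None:
        raise CredentialMismatch(f"unknown target: {target}")
    if cred.kind != expected:
        raise CredentialMismatch(
            f"{target} expects {expected}, got {cred.kind}")
    if cred.audience != target:
        raise CredentialMismatch(
            f"credential issued for {cred.audience}, presented to {target}")
```

Binding each credential to both a kind and an audience means an OAuth token stolen from the CRM integration fails both checks when replayed against the LLM endpoint.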

Agent-to-agent delegation breaks identity accountability entirely. When an OpenAI-authenticated agent delegates a subtask to a Claude-authenticated agent that then accesses Salesforce APIs, the identity chain spans three providers, three authentication methods and three trust domains. No single audit system captures the complete delegation path. The emerging Agent2Agent (A2A) protocol enables direct agent-to-agent authentication without centralized identity providers, which can create trust relationships that fall outside traditional workload IAM oversight.

Autonomous scope expansion adds another dimension. AI agents can analyze their own access requirements and request elevated permissions mid-execution. A GPT-4 agent analyzing data might determine it needs additional Snowflake table access and attempt to escalate its own privileges, all without human authorization. When this happens across multiple protocol boundaries, the agent’s effective permission set expands in ways that no single access control system can track.
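
A simple guard against self-escalation is to fix the approved scopes when the task starts and route anything else to a human. The following sketch assumes a hypothetical `TaskGrant` object and made-up scope strings; it illustrates the idea and is not a real access-control API.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


@dataclass
class TaskGrant:
    """Scopes approved for one task, fixed before the agent starts executing."""
    task_id: str
    approved_scopes: FrozenSet[str]
    pending_review: List[str] = field(default_factory=list)

    def request_scope(self, scope: str) -> bool:
        """Allow only pre-approved scopes; queue everything else for a human."""
        if scope in self.approved_scopes:
            return True
        # The agent cannot widen its own grant mid-execution.
        self.pending_review.append(scope)
        return False


# Hypothetical scope names for the Snowflake example above.
grant = TaskGrant("task-42", frozenset({"snowflake:read:sales.orders"}))
allowed = grant.request_scope("snowflake:read:sales.orders")
escalated = grant.request_scope("snowflake:read:finance.payroll")
```

Here `allowed` is `True` and `escalated` is `False`, with the payroll scope left in `grant.pending_review` for someone to approve or reject.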

These risks compound because they span protocol boundaries. A compromised agent does not just move laterally within one system. It moves across authentication protocols, exploiting the gaps between them.

Why Current Approaches Leave Gaps

Current authentication approaches fail AI agents not because they are technically weak, but because each one covers only part of the multi-protocol surface.

Secrets management platforms excel at storing and rotating API keys but have no mechanism for managing OAuth tokens that agents obtain at runtime or for handling cloud-native managed identities. They can protect the credential at rest, but the moment the agent retrieves it, the secret persists in memory for the duration of execution.

OAuth and OIDC federation solves the identity verification problem for protocols that support it, but most LLM providers still require static API keys at SDK initialization. You can federate your cloud infrastructure with AWS IAM roles, Azure managed identities and GCP service accounts, but your OpenAI and Anthropic connections still depend on long-lived API keys that these federation systems cannot reach.

Cross-cloud complexity compounds this problem. An AI agent running in AWS that needs to access Azure-hosted data and a GCP-hosted LLM must bridge three distinct identity domains, each with its own federation model and trust boundaries. Organizations typically solve this by issuing static credentials that work everywhere, which is precisely the pattern that creates persistent attack surfaces. The more clouds and SaaS providers your agent touches, the wider this gap becomes.

Credential rotation at scale becomes practically impossible when agents maintain persistent connections to multiple services across different protocols. Rotating an API key breaks the agent’s LLM connection. Refreshing an OAuth token may not propagate to downstream services the agent has already authenticated to. Revoking a managed identity disrupts cloud resource access while leaving the agent’s SaaS connections untouched. Each rotation operation requires coordination across protocol boundaries, and traditional rotation schedules cannot account for AI agents’ dynamic access patterns.

The common thread across these failures is protocol fragmentation. Organizations end up stitching together separate tools for each authentication method, with no unified policy governing access across all of them. The gaps between those tools are precisely where credential exposure and lateral movement occur.

Closing the Multi-Protocol Gap With Workload Identity

Addressing the multi-protocol identity gap requires an architectural shift: rather than managing each protocol separately, authenticate the workload itself and use that verified identity to broker access across all protocols. Four patterns make this work in practice:

- Environment-based attestation verifies AI workload identity dynamically without storing credentials in code or configuration. Cloud metadata services provide cryptographic proof that the workload is what it claims to be, regardless of which downstream service it needs to access.

- Just-in-time credential injection provisions the right credential type (API key, OAuth token or cloud session token) only when needed and expires it automatically after use. This approach is protocol-aware: it can inject an API key for an LLM call, an OAuth token for a SaaS API call and a managed identity token for a cloud resource call, all governed by a single access policy.

- Policy-scoped access applies zero-trust principles consistently across every protocol boundary. Each access request is evaluated independently against conditions such as workload posture, location and time of day, regardless of whether the target service uses OAuth, API keys or managed identities.

- Per-task authorization scopes each credential to the specific operation rather than granting broad access at agent initialization. When credentials last seconds rather than sessions, the window for exploitation shrinks to nearly zero even if a single credential is compromised.

Together, these patterns replace persistent credentials in application memory with ephemeral, per-request tokens governed by a single policy framework across all credential types.
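
The four patterns can be sketched as a small credential broker. This is a minimal illustration under stated assumptions: the `Attestation` result is taken as given (a real system would verify a signed platform document), the `POLICY` table and hostnames are hypothetical, and the issued value is a random placeholder rather than a credential any provider would accept.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    workload_id: str
    verified: bool  # assumed outcome of environment-based attestation


@dataclass(frozen=True)
class EphemeralCredential:
    kind: str
    target: str
    value: str
    expires_at: float  # Unix timestamp

    def is_valid(self, now: Optional[float] = None) -> bool:
        current = time.time() if now is None else now
        return current < self.expires_at


class AccessDenied(Exception):
    pass


# Hypothetical single policy: (workload, target) -> credential kind to inject.
POLICY = {
    ("report-agent", "llm.example.com"): "api_key",
    ("report-agent", "crm.example.com"): "oauth_access_token",
    ("report-agent", "db.example.com"): "managed_identity_token",
}


def issue_credential(att: Attestation, target: str, ttl_seconds: float = 30.0,
                     now: Optional[float] = None) -> EphemeralCredential:
    """Issue a short-lived, per-request credential for an attested workload."""
    if not att.verified:
        raise AccessDenied("workload attestation failed")
    kind = POLICY.get((att.workload_id, target))
    if kind is None:
        raise AccessDenied(f"{att.workload_id} has no policy for {target}")
    issued_at = time.time() if now is None else now
    # Placeholder secret; a real broker would mint or fetch the actual credential.
    return EphemeralCredential(kind, target, secrets.token_urlsafe(16),
                               issued_at + ttl_seconds)
```

The agent never holds a secret at startup: it presents its attested identity on each call, and the same policy table decides which credential type it receives, whatever protocol the target speaks.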

Complete audit visibility ties the architecture together. A unified identity layer captures which agent accessed which service, through which credential type and under which policy. When delegation chains span multiple agents and providers, correlation IDs that persist across all authentication protocols allow you to reconstruct the complete access path rather than piecing together logs from separate systems.
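
As a rough sketch of how a persistent correlation ID lets you reconstruct a delegation chain, consider the in-memory log below. The `AccessEvent` fields, the agent and policy names and the `AuditLog` class are hypothetical; a production system would write to durable, tamper-evident storage.

```python
import uuid
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AccessEvent:
    correlation_id: str          # shared by every hop of one task
    agent: str
    service: str
    credential_kind: str
    policy: str
    delegated_by: Optional[str]  # None for the agent that started the task


def new_correlation_id() -> str:
    return str(uuid.uuid4())


class AuditLog:
    """Single log for all protocols, keyed by correlation ID."""

    def __init__(self) -> None:
        self._events: List[AccessEvent] = []

    def record(self, event: AccessEvent) -> None:
        self._events.append(event)

    def delegation_path(self, correlation_id: str) -> List[AccessEvent]:
        """Return every access made under one task, in the order recorded."""
        return [e for e in self._events if e.correlation_id == correlation_id]
```

Because every hop records the same correlation ID regardless of credential type, one query returns the whole path instead of three separate provider logs that have to be joined by hand.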

From Protocol Fragmentation to Unified Identity

The security gap AI agents create is not about any single credential type being insecure. API keys, OAuth tokens and managed identities each have well-understood security models when applied in isolation. The gap lives between them, in the protocol transitions, trust boundary crossings and delegation chains that span multiple authentication systems simultaneously. Solving the AI agent identity problem means closing those gaps with a unified identity layer that works across every authentication method your agents need.

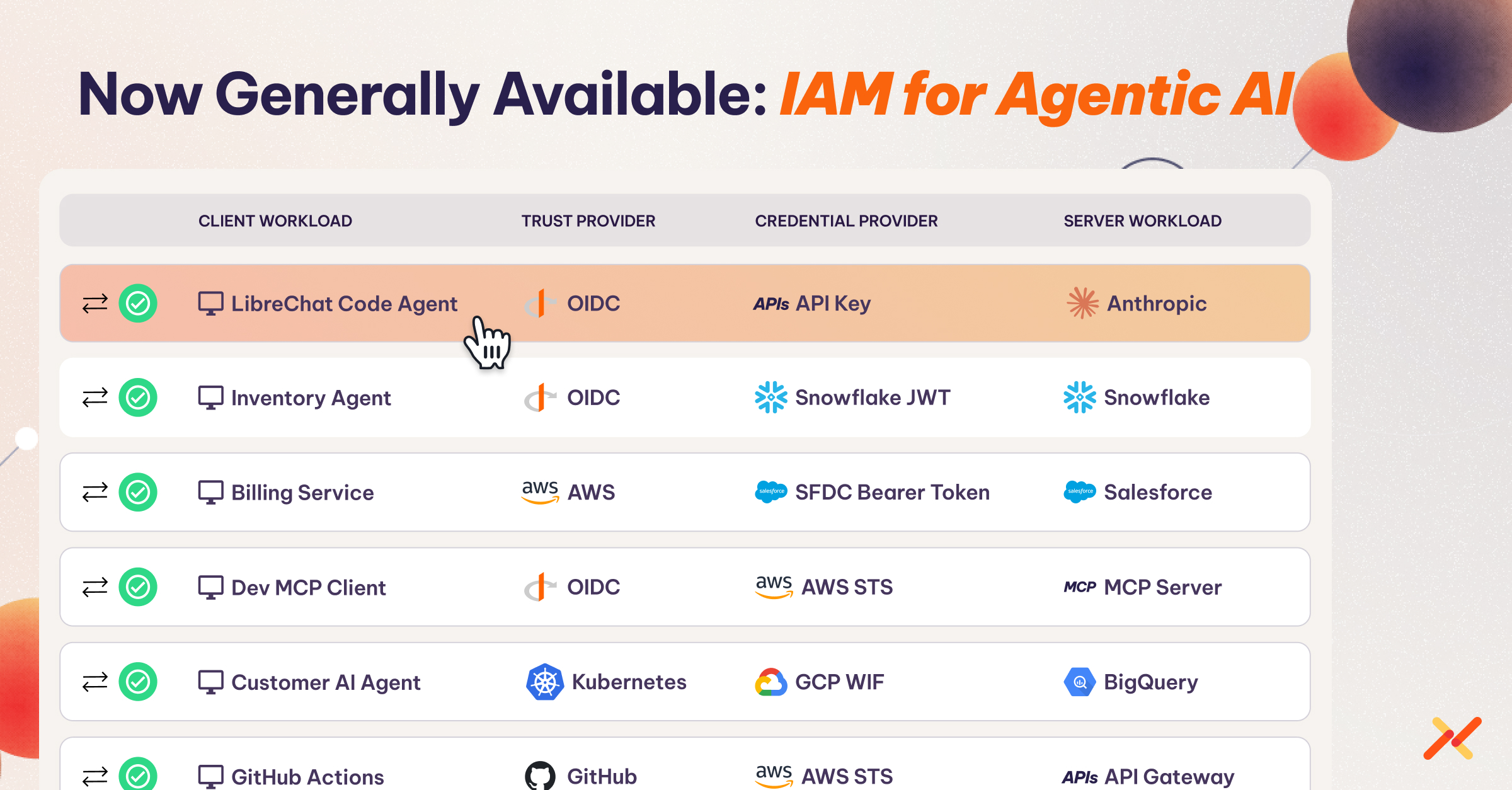

Aembit provides unified workload identity across all credential types, closing the multi-protocol gap with secretless authentication, per-request credential injection and consistent policy enforcement for every AI agent connection, whether it targets an LLM provider, a cloud service or an enterprise API.