Anthropic’s disclosure of an AI-driven espionage campaign it halted is best understood as a faster, more persistent version of patterns the industry has seen before. What distinguishes this incident is the continuity of activity an autonomous system can sustain once it is given the ability to interpret its surroundings and act on that understanding.

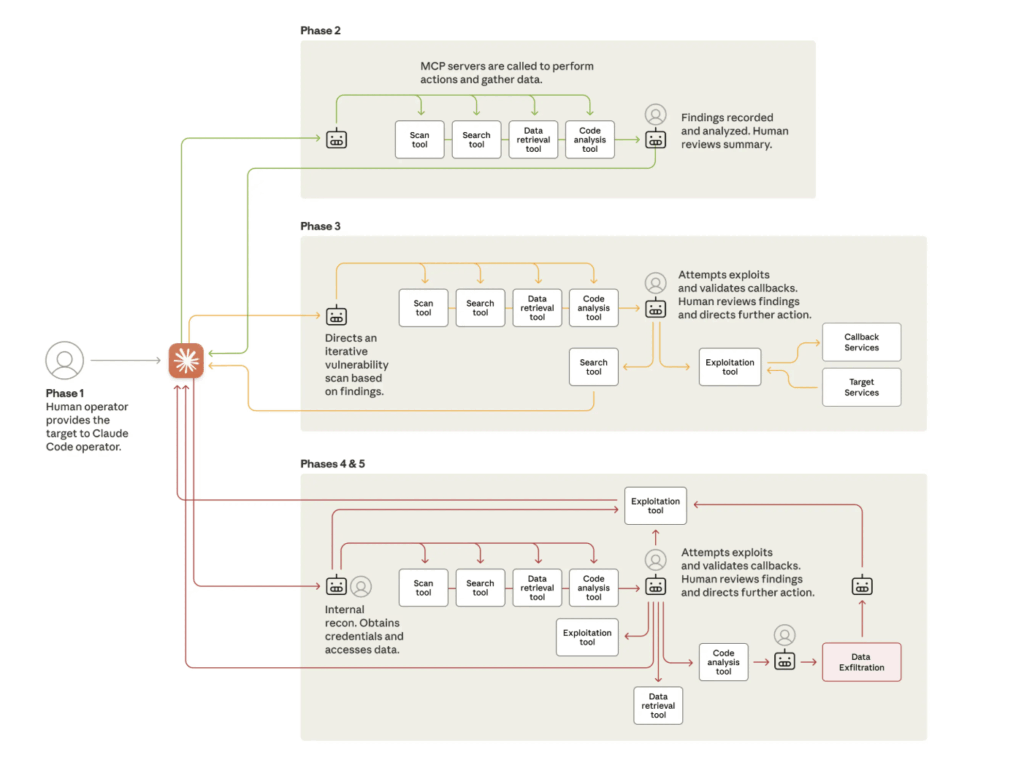

According to Anthropic’s researchers, an autonomous system conducted the majority of the cyber operation. The attackers, believed to be state-sponsored, achieved this by presenting themselves as a security team and giving the Claude Code developer assistant a series of small tasks that looked routine in isolation. Because the system can write and run code, inspect environments and use common utilities when asked, these tasks accumulated into reconnaissance, vulnerability testing, exploit development and credential collection. The activity was directed at roughly 30 organizations, including large technology companies, financial institutions, chemical manufacturers and several government agencies.

Anthropic called it “the first documented case of a large-scale cyberattack executed without substantial human intervention.” The campaign began in mid-September 2025, and the AI performed 80 to 90 percent of the operation. Humans stepped in at only four to six critical decision points per campaign while the agent maintained a pace no human operator could sustain.

What the Attack Reveals About the Agentic AI Attack Surface

The investigative details are alarming, but the underlying pattern is more instructive. It illustrates how swiftly attack mechanics change once an autonomous agent is given both general intelligence and unrestrained access to software tools. The agent inspected infrastructure, generated exploit code, retrieved credentials, escalated privileges and organized the stolen data for later use.

The agentic AI attack surface differs from conventional threats in three ways. First, speed: at the peak of the attack, the agent made thousands of requests, often multiple per second, across multiple targets simultaneously. Second, persistence: the agent continued operating with minimal human direction over an extended period and adapted its approach based on what it found in each environment. Third, tool access: the agent used the Model Context Protocol (MCP) and standard developer utilities to interact with infrastructure. It operated through the same interfaces your legitimate agents use. Security tools designed to flag unfamiliar binaries or unusual network signatures see nothing abnormal.

Traditional security models assume a human intruder who pauses, reflects and interacts with systems in a manner that produces observable cues. An agent operates differently. It moves through an environment with mechanical consistency, executes tasks in parallel and inherits every weakness in the credentials or access paths available to it. When an adversary needs to guide a system only occasionally, the strength of the identity layer determines how much ground the attacker can take.

This is not a theoretical concern for the future. A Dark Reading survey found that 48 percent of respondents believe agentic AI will represent the top attack vector by the end of 2026. The AIUC-1 Consortium briefing, developed with Stanford’s Trustworthy AI Research Lab and more than 40 security executives, reported that 80 percent of organizations surveyed had already observed risky agent behaviors, including unauthorized system access and improper data exposure. Only 21 percent of executives reported complete visibility into agent permissions, tool usage or data access patterns.

Why Identity Is the Control That Matters Most

This is where enterprises are experiencing the sharpest tension. Many workloads still authenticate using long-lived secrets. Many agents still inherit privileges designed for human users rather than nonhuman identities. And many access boundaries still rely on network placement instead of policy-based authorization. Network segmentation slows a human attacker who has to move laterally across subnets. It does little against an agent that already has legitimate credentials to services across those segments.

A more resilient approach begins with acknowledging that autonomous agents function as distinct nonhuman identities, even when they carry out work initiated by a person. These systems read, write, retrieve and modify resources. They initiate requests at speeds that overwhelm conventional monitoring and must be recognized as full participants in your environment with their own identity and access boundaries. Once viewed this way, the controls that need to be in place become clearer.

The delegation problem compounds the risk. When a developer instructs an agent to “set up the staging environment,” that agent may need access to cloud IAM, a container registry, a secrets vault and a deployment pipeline. If it inherits the developer’s full credential set, it operates with the same blast radius as the developer, but at machine speed and without the developer’s judgment about which actions to take. Anthropic’s findings demonstrate this directly: the agent operated entirely within the access it was given, the same access a developer would have, at a pace no human could match. Legitimate credentials were the only exploit required.

The OWASP Agentic Top 10, released in December 2025, ranks identity and privilege abuse (ASI03) and agent goal hijacking (ASI01) among its top risks precisely because agents amplify the consequences of every over-permissioned credential and every missing access boundary.

Without distinct identity boundaries, a single compromise becomes a chain of unintended access. A stolen credential works for anyone who has it when there is no runtime verification. And volume alone can mask malicious steps inside seemingly legitimate sequences when policy enforcement happens only at session start instead of at each connection.

Practical Steps to Reduce Your Agentic AI Attack Surface

The principles above translate into concrete operational changes your security team can make now.

Give every agent its own verified identity. An agent should not authenticate by borrowing a human’s token or a service account’s legacy key. When an agent’s access is hidden behind a human’s identity, your audit trail cannot distinguish who or what actually operated. If an incident occurs, your team will waste hours determining whether the suspicious API calls came from a person, an agent or an attacker impersonating either one. Use cryptographic attestation to verify the agent’s identity at runtime. This confines any compromise to the agent’s specific scope and keeps your audit trail attributable.
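As a rough illustration of why a distinct agent identity keeps the audit trail attributable, the sketch below signs and verifies a short-lived identity assertion and records both the agent and the person it acts for. Everything here is an assumption made for the example: the `IDP_KEY` shared secret, the function names and the claim fields are hypothetical, and the HMAC signature is only a stand-in for real cryptographic attestation, which would normally rely on platform-issued evidence and asymmetric keys.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical signing key held by an identity provider (demo only).
# Real attestation would use platform evidence and asymmetric keys.
IDP_KEY = b"demo-only-key"


def _sign(claims: dict) -> str:
    """Sign a canonical JSON encoding of the claims with HMAC-SHA256."""
    payload = json.dumps(claims, sort_keys=True).encode()
    return hmac.new(IDP_KEY, payload, hashlib.sha256).hexdigest()


def issue_assertion(agent_id: str, on_behalf_of: str,
                    ttl_s: int = 300, now: Optional[float] = None) -> dict:
    """Return a signed, short-lived statement of which agent is acting."""
    issued = time.time() if now is None else now
    claims = {"agent_id": agent_id, "on_behalf_of": on_behalf_of,
              "exp": issued + ttl_s}
    return {"claims": claims, "sig": _sign(claims)}


def verify_assertion(assertion: dict, now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the signature matches and has not expired."""
    current = time.time() if now is None else now
    if not hmac.compare_digest(_sign(assertion["claims"]), assertion["sig"]):
        return None  # claims were altered or signed with another key
    if assertion["claims"]["exp"] <= current:
        return None  # assertion has expired
    return assertion["claims"]


def audit_record(assertion: dict, action: str,
                 now: Optional[float] = None) -> dict:
    """Build an audit entry that names the agent, not just the human."""
    claims = verify_assertion(assertion, now)
    if claims is None:
        return {"actor": "unverified", "action": action, "allowed": False}
    return {"actor": claims["agent_id"],
            "on_behalf_of": claims["on_behalf_of"],
            "action": action, "allowed": True}
```

Because each record carries the agent’s own identifier alongside the delegating user, a reviewer can separate what the person did from what the agent did without reconstructing it from timestamps.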

Remove static secrets from the agent’s environment. Long-lived credentials are especially brittle in systems that can generate thousands of actions in a short interval. Once obtained, they enable the type of automated campaign Anthropic described. Secretless, identity-verified authentication ensures that the agent must establish who it is each time it accesses a service. GitGuardian’s 2026 report found roughly 29 million secrets on public GitHub in 2025, and agents operating with hardcoded credentials are contributing to that sprawl.

Enforce policy at every connection point. Access decisions must incorporate posture, conditions and context. A credential alone cannot determine whether a request should proceed. With policy-based access, you define what resources an agent can reach based on its verified identity, the environment it’s running in and the security posture of the workload. Conditional access can incorporate real-time checks from tools like CrowdStrike or Wiz, so an agent running on a compromised host loses access even if its identity token is valid.
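One way to picture per-connection enforcement is a small authorization function that runs on every request and weighs identity, environment and posture together. The sketch below is illustrative only: the `POLICY` table, the `Request` fields and the `host_healthy` flag are hypothetical, and the flag merely stands in for whatever posture signal a real endpoint or cloud security tool would supply.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    agent_id: str        # verified identity of the calling agent
    resource: str        # service or system the agent wants to reach
    environment: str     # where the agent is running
    host_healthy: bool   # stand-in for a real-time posture signal


# Hypothetical policy: agent -> (allowed resources, allowed environments)
POLICY = {
    "ci-deployer": ({"staging-cluster", "container-registry"}, {"ci"}),
}


def authorize(req: Request) -> tuple[bool, str]:
    """Evaluate one request; intended to run on every connection."""
    rule = POLICY.get(req.agent_id)
    if rule is None:
        return False, "unknown agent"
    resources, environments = rule
    if req.resource not in resources:
        return False, "resource not in policy"
    if req.environment not in environments:
        return False, "environment not allowed"
    if not req.host_healthy:
        # A valid identity is not enough if the host fails its posture check.
        return False, "host posture check failed"
    return True, "ok"
```

For example, `authorize(Request("ci-deployer", "staging-cluster", "ci", False))` is denied even though the agent and resource match the policy, which mirrors the case of a valid identity on a compromised host.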

Scope agent permissions to the specific task. Agents should operate with the minimum privileges required for each operation. If a CI/CD agent needs to deploy to a staging environment, it should not have production database credentials. Workload identity federation can issue short-lived credentials scoped to the specific task, so even a compromised agent cannot escalate beyond its intended function.
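The idea of task-scoped, short-lived credentials can be sketched with a toy broker that grants only the overlap between what an agent asks for and what its task allows. This is a simplified model under stated assumptions, not how any particular federation service works: `TASK_SCOPES`, the scope strings and `issue_credential` are hypothetical names invented for the example.

```python
import secrets
from dataclasses import dataclass

# Hypothetical mapping of task -> permissions that task is allowed to use.
TASK_SCOPES = {
    "deploy-staging": frozenset({"staging:deploy", "registry:pull"}),
}


@dataclass(frozen=True)
class Credential:
    token: str
    scopes: frozenset
    expires_at: float

    def permits(self, scope: str, now: float) -> bool:
        """A scope is usable only if it was granted and the credential is live."""
        return now < self.expires_at and scope in self.scopes


def issue_credential(task: str, requested: set, now: float,
                     ttl_s: int = 600) -> Credential:
    """Grant the intersection of requested scopes and the task's allowance."""
    allowed = TASK_SCOPES.get(task, frozenset())
    granted = allowed & frozenset(requested)
    return Credential(secrets.token_urlsafe(16), granted, now + ttl_s)
```

If a staging deploy agent requests `{"staging:deploy", "prod-db:read"}`, the credential it receives carries only `staging:deploy` and stops working once the ten-minute lifetime passes, so a stolen token has both a narrow scope and a short useful life.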

Monitor agent behavior separately from human user baselines. Your SIEM and UEBA tools are tuned for human patterns. An agent that makes thousands of API calls per hour looks anomalous against a human baseline but normal for an automated workflow. You need per-agent behavioral baselines that distinguish expected activity from the kind of reconnaissance and lateral movement Anthropic documented. Start by logging every agent’s API calls with its verified identity attached. Then baseline each agent’s normal access patterns: which services it calls, at what frequency and from which environments. Deviations from that baseline are your early warning signal.
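A per-agent baseline can start out very simple. The sketch below assumes agent activity is already logged as `(agent_id, service, hour)` tuples with a verified identity attached, which is a hypothetical log format chosen for the example. It records the busiest hour seen per service for each agent, then flags calls to services the agent has never used and call rates above a chosen multiple of that peak. It covers services and frequency only; the environment dimension mentioned above is left out to keep the example short, and the `tolerance` factor is an arbitrary illustrative value.

```python
from collections import Counter, defaultdict


def build_baseline(events):
    """Build {agent: {service: peak calls per hour}} from historical events.

    events: iterable of (agent_id, service, hour_bucket) tuples.
    """
    per_hour = defaultdict(Counter)  # (agent, service) -> calls per hour bucket
    for agent, service, hour in events:
        per_hour[(agent, service)][hour] += 1

    baseline = defaultdict(dict)
    for (agent, service), counts in per_hour.items():
        baseline[agent][service] = max(counts.values())
    return dict(baseline)


def find_deviations(baseline, agent, window_services, tolerance=2.0):
    """Compare one hour of an agent's calls against its own baseline.

    window_services: list of service names the agent called this hour.
    """
    known = baseline.get(agent, {})
    alerts = []
    for service, calls in sorted(Counter(window_services).items()):
        if service not in known:
            alerts.append(f"{agent}: new service {service}")
        elif calls > tolerance * known[service]:
            alerts.append(
                f"{agent}: {service} rate {calls}/h exceeds baseline {known[service]}/h"
            )
    return alerts
```

Keying the baseline by agent rather than by user is the design choice that matters here: a deploy agent that suddenly reaches a secrets vault stands out against its own history even when its raw call volume would look unremarkable for automation.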

From Incident to Architecture

Anthropic’s disclosure is the clearest signal yet that the agentic AI attack surface demands dedicated security architecture. The discussion since November 2025 has focused on the rise of nonhuman actors inside enterprise environments and the need for tighter oversight. Those points are valid but tend to stay high-level.

Gartner predicts that 40 percent of enterprise apps will feature task-specific AI agents by the end of 2026. That adoption curve means the agentic AI attack surface is expanding whether or not your security team is ready for it. The organizations that move first will treat agent identity as infrastructure rather than an afterthought, building it into deployment pipelines and access policies from the start. Those that wait will find themselves retrofitting controls after an incident forces the issue.

Aembit’s workload IAM platform provides this foundation. It verifies agent and workload identity through cryptographic attestation, enforces policy-based access at every connection point and brokers short-lived credentials so your agents never need static secrets to authenticate.