LLMs are quickly becoming teams’ preferred UI for many tasks, and MCP servers are the secret sauce that connects AIs to real applications. They give agents the ability to access tools, query data, and take action. For example, an LLM with access to a code repository can assist a developer in finding bugs and creating tickets for the right person to fix them.

That shift introduces a new problem most teams aren’t fully thinking through yet: who or what is making those requests, and what should it be allowed to do.

In this walkthrough, we’ll build a simple MCP server and show how it connects to an LLM, along with a few things to keep in mind before using it beyond a test environment.

What Are MCPs?

Model Context Protocol (MCP) is an open-source standard for integrating applications and services with LLMs, defining a structured way for them to communicate. MCP servers are implementations of this standard, and they are quickly being adopted across developer tools and AI platforms. You can check the official documentation for the full definition.

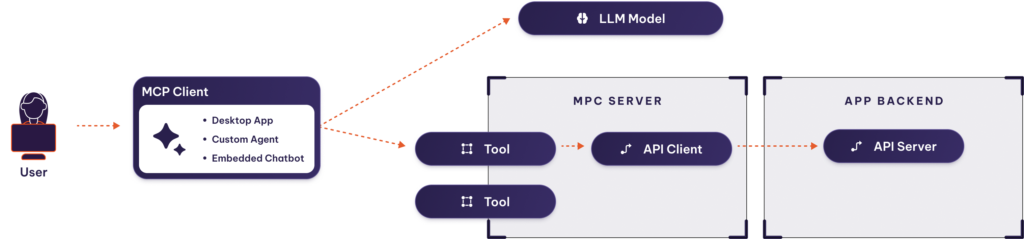

As you can see, MCP is a client-server architecture where:

- The MCP server exposes tools.

- Tools are services exposed by the MCP server to be used by the LLM. If your app already has an API, you can code tools as wrappers for your API endpoints.

- Then, MCP clients consume these services, interpret the information, and provide it to the LLM to obtain a response. Most LLM desktop apps are MCP clients.

Beyond tools, MCP servers can also expose resources (read-only data such as files or database records) and prompts (pre-written prompt templates).
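To make the client-server exchange more concrete, here is a minimal sketch of the messages involved. MCP messages follow JSON-RPC 2.0, and the `tools/list` and `tools/call` method names come from the MCP specification. The `add_item` tool and its `item` argument are borrowed from the example built later in this article, and the `make_request` helper is a hypothetical name used only for illustration.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC requests carry an id so that responses can be matched to them.
_ids = count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request shaped like the ones an MCP client sends."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# 1. The client asks the server which tools it exposes.
list_request = make_request("tools/list")

# 2. When the LLM decides to use a tool, the client sends a tools/call request
#    naming the tool and passing its arguments.
call_request = make_request(
    "tools/call",
    {"name": "add_item", "arguments": {"item": "milk"}},
)

if __name__ == "__main__":
    print(json.dumps(list_request, indent=2))
    print(json.dumps(call_request, indent=2))
```

In practice you rarely build these messages by hand, since the MCP client and the server framework do it for you. Seeing their shape still helps when reading logs or debugging.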

Security Challenges of MCPs

MCP servers share several traits with web APIs:

- Authentication and authorization are handled at the HTTP layer.

- Inputs may contain harmful data.

- The server may contain bugs and vulnerabilities that pose security risks.

A common mistake on MCP servers is loose access control, which leads to unauthorized access to sensitive resources and data leaks. As with API servers, each user’s access must be limited to the data they are entitled to.

We’ll see how to secure a server later on.

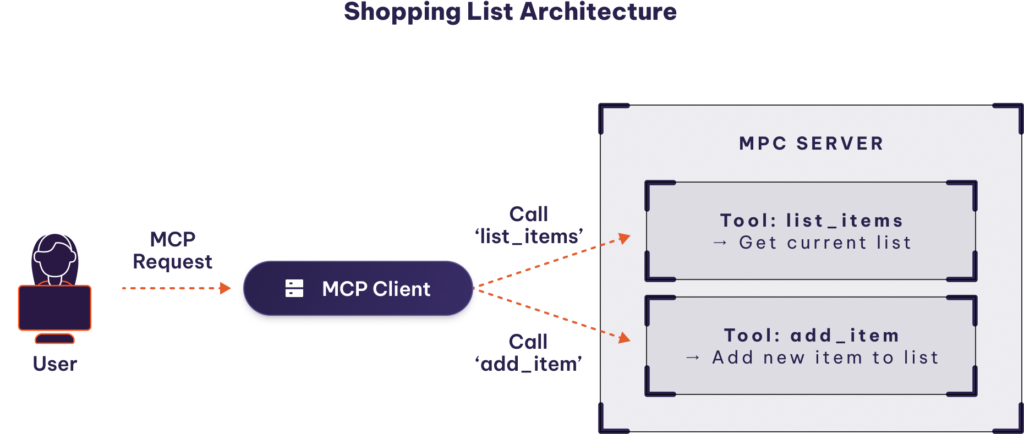

Example: MCP for a Shopping List app

Let’s learn by example. We’ll use an imaginary “shopping list app” as a backend, since it’s a simple example you can extrapolate to your services. First, we’ll build an MCP server for the Shopping list app, then we’ll interact with it from an LLM.

Our MCP server will expose two tools:

- add_item: adds a new item to the shopping list.

- list_items: lists all items present in the shopping list.

This tutorial will use Python 3 with FastMCP (https://gofastmcp.com/), a straightforward framework for building MCP servers.

Creating the MCP Server Code

Defining a tool with FastMCP is as simple as adding the @mcp.tool decorator to a function definition. Our whole MCP server fits in this server.py file:

Python:

from fastmcp import FastMCP

mcp = FastMCP("shopping-list")

shopping_list = []

@mcp.tool()
def add_item(item: str) -> str:
    """Adds a new item to the shopping list"""
    shopping_list.append(item)
    return f"{item} added to shopping list"

@mcp.tool()
def list_items() -> list[str]:
    """Lists all items present in the shopping list"""
    return shopping_list

if __name__ == "__main__":
    mcp.run()

Let’s dive in and explain some bits in detail.

Python:

mcp = FastMCP("shopping-list")

shopping_list = []

The mcp variable contains all the FastMCP magic that turns our functions into MCP tools.

The shopping_list variable represents our data backend. It’s a plain Python list to keep the example short; in a real server, you would use an API client to connect to your application.

Pay attention to the docstring on the first line of each function: FastMCP uses it as the description of the tool. It has to be a triple-quoted (""") docstring, because a regular # comment is not available at runtime.
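To see why the triple-quoted string (a docstring) matters, here is a sketch of how a framework could derive a tool description from a plain function using only the standard library. This is not FastMCP's actual implementation: `describe_tool` is a hypothetical helper, and its `parameters` field is a simplification of the JSON Schema (`inputSchema`) that real MCP tool descriptions carry.

```python
import inspect
from typing import get_type_hints


def describe_tool(func) -> dict:
    """Derive a simplified tool description from a function's metadata."""
    hints = get_type_hints(func)
    hints.pop("return", None)  # only the parameters describe the tool's inputs
    return {
        "name": func.__name__,
        # inspect.getdoc returns None when the function has no docstring
        "description": inspect.getdoc(func) or "",
        "parameters": {name: hint.__name__ for name, hint in hints.items()},
    }


def add_item(item: str) -> str:
    """Adds a new item to the shopping list"""
    return f"{item} added to shopping list"


def undocumented(item: str) -> str:
    # A regular comment is invisible at runtime, so the description stays empty.
    return item


description = describe_tool(add_item)

if __name__ == "__main__":
    print(description)
    print(describe_tool(undocumented))
```

The function without a docstring ends up with an empty description, which leaves the LLM with little to go on when deciding whether to call it.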

FastMCP will automatically turn code like this:

Python:

@mcp.tool()
def add_item(item: str) -> str:
    """Adds a new item to the shopping list"""

into a tool description like this:

Json:

{
    "name": "add_item",
    "description": "Adds a new item to the shopping list",
    …
}

Finally, we start the MCP server in the main block:

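Python:

if __name__ == "__main__":
    mcp.run()

By default, mcp.run() uses the STDIO transport: the client launches the server as a subprocess and talks to it over standard input and output. FastMCP can also serve over HTTP (listening on port 8000 unless you change it), for example by passing transport="http" to mcp.run().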

MCP Inspector

Let’s test that our MCP server works before connecting it to an LLM.

For that, we’ll use MCP Inspector, a tool designed to inspect and debug MCP servers by intuitively displaying the configuration you added.

To launch MCP Inspector, you can run:

Shell:

npx @modelcontextprotocol/inspector

This command opens a browser tab with MCP Inspector’s UI, pointing at localhost:6274.

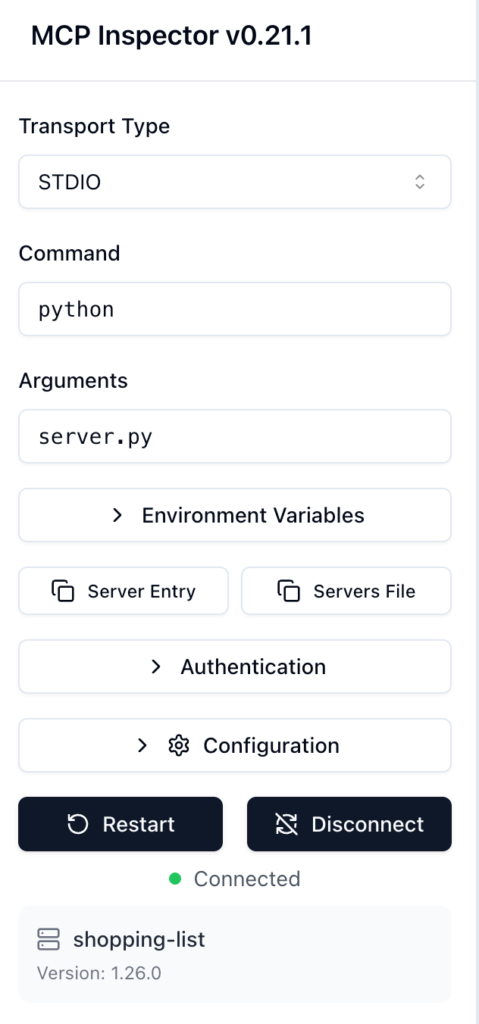

Here, you can choose the transport used to reach the MCP server: STDIO or HTTP.

For the Shopping List example, and for local development in general, select STDIO and enter the command used to launch the server (in our case, python server.py), then press the Connect button.

Notice how relevant information about your MCP server now appears on the screen.

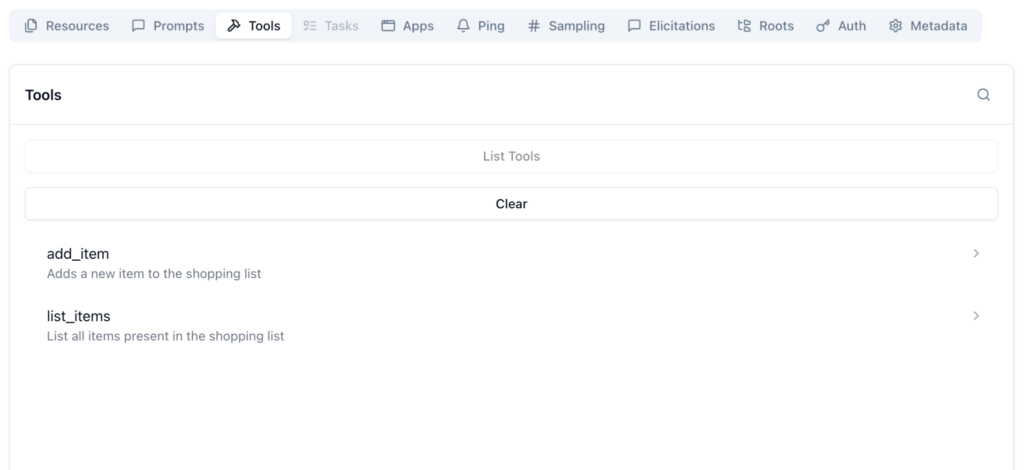

Go to the Tools section and press the List Tools button.

Nice! The two tools that you created before appear here (add_item and list_items). Note how the docstring you included serves as the description of each tool.

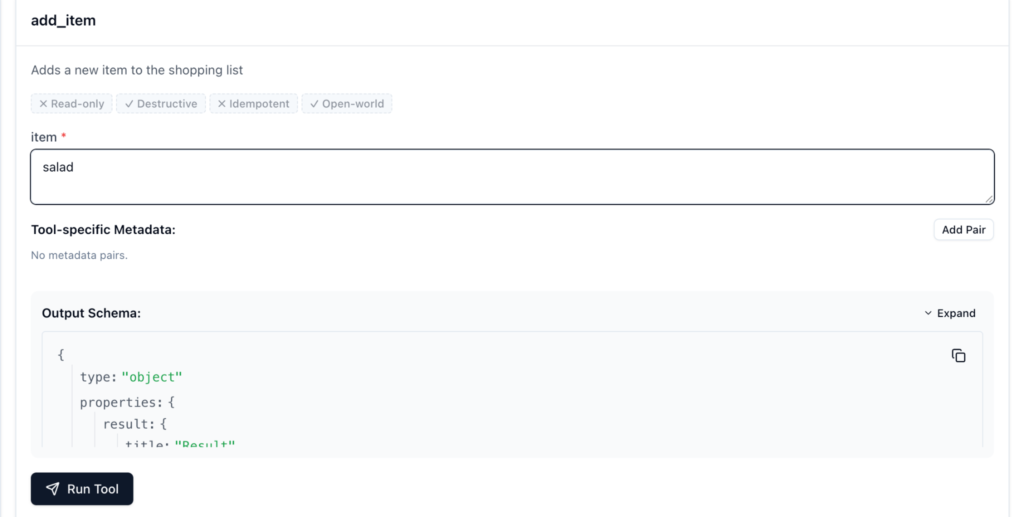

Now select the add_item tool.

You can now see that there’s an option to Run Tool. Use it to add an item to the shopping list directly from the inspector.

In the same way, you can run the list_items tool afterward, and the added shopping list item will appear in the output.

Connect the MCP With Your LLM

Let’s add the final touch to our integration by connecting it to an AI agent!

For this example, we will use Claude, as it has wide support for MCP servers on its free tier.

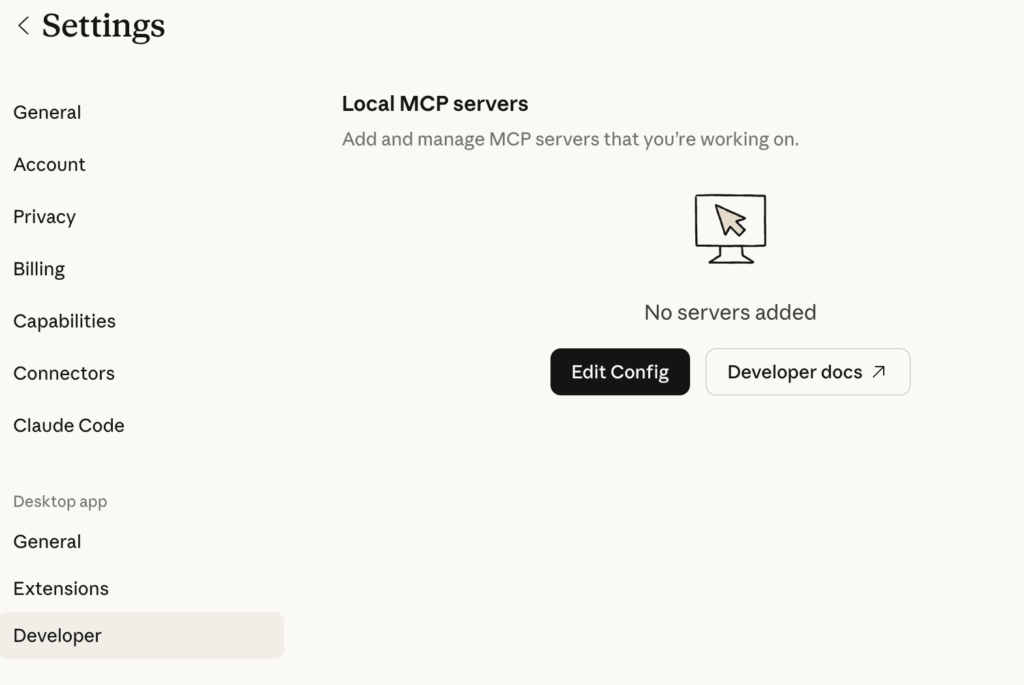

Open claude_desktop_config.json by clicking Settings -> Developer -> Edit Config, then add the following entry:

Json:

"mcpServers": {
    "shopping-list": {
        "command": "/path-to-your-python-folder/bin/python",
        "args": [
            "/path-to-your-mcp-server-folder/server.py"
        ]
    }
}

You can find your Python interpreter’s full path with which python. If you’re using a virtual environment, run which python while the environment is active, so that you get the interpreter that has FastMCP installed.
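If `which` is not available on your system (on Windows, for example), Python can report its own location. The sketch below builds the same kind of `mcpServers` entry from `sys.executable`. The `build_server_entry` helper is a hypothetical name, and the script assumes you run it from the folder that contains server.py, with the right virtual environment active.

```python
import json
import sys
from pathlib import Path


def build_server_entry(server_script: str) -> dict:
    """Return an mcpServers entry that launches server_script with this interpreter."""
    return {
        "mcpServers": {
            "shopping-list": {
                # sys.executable is normally the absolute path of the running
                # interpreter, so run this script inside the environment you
                # want Claude to use.
                "command": sys.executable,
                # Resolve the script to an absolute path, since the client
                # will not start in your project folder.
                "args": [str(Path(server_script).resolve())],
            }
        }
    }


if __name__ == "__main__":
    print(json.dumps(build_server_entry("server.py"), indent=2))
```

You can then copy the printed entry into claude_desktop_config.json.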

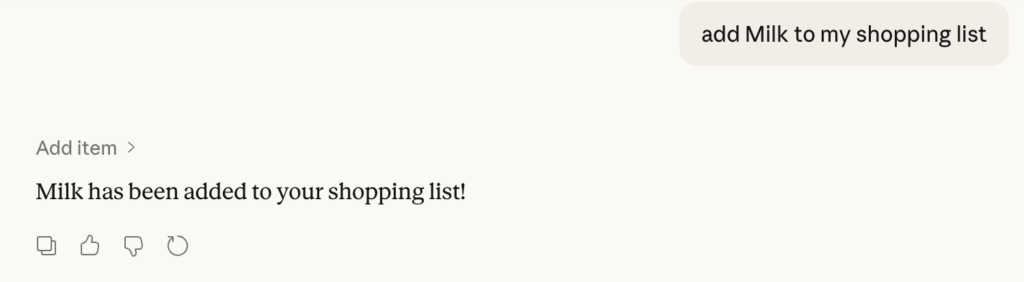

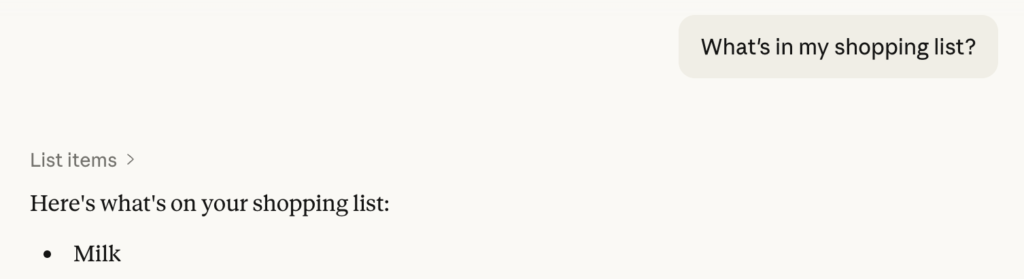

Now, restart Claude and try interacting with your shopping list.

Without extra configuration, Claude is able to detect the right tool.

That’s the end of the tutorial on creating a basic MCP for your LLM. Now, let’s review some security best practices.

How To Secure MCP Servers

As we hinted earlier, MCP servers are a lot like API servers, so similar principles apply. Here’s a brief reminder of security best practices for public APIs:

- Secure authentication with proven methods.

- Provide authorization, limiting the data and actions available to each user.

- Sanitize inputs to avoid exploits of the underlying code.

- Keep an inventory of software binaries and libraries and their vulnerabilities, then update them and mitigate any severe vulnerability that applies to you.

- Create a safety net that detects suspicious behavior, blocks threats, and quarantines compromised assets.

- Finally, audit the activity on the services to enable forensic investigations.
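As an illustration of the input sanitization point in the list above, here is a minimal sketch of a validator that a tool such as add_item could call before touching the backend. The `validate_item` name, the 100-character limit, and the specific rules are assumptions for this example; the right rules depend on what your backend does with the value.

```python
MAX_ITEM_LENGTH = 100  # assumed limit; tune it to your application


def validate_item(item: str) -> str:
    """Return a cleaned item name, or raise ValueError if it is unacceptable."""
    if not isinstance(item, str):
        raise ValueError("item must be a string")
    cleaned = item.strip()
    if not cleaned:
        raise ValueError("item must not be empty")
    if len(cleaned) > MAX_ITEM_LENGTH:
        raise ValueError(f"item must be at most {MAX_ITEM_LENGTH} characters")
    # Reject control characters such as newlines or NUL bytes inside the value.
    if any(not ch.isprintable() for ch in cleaned):
        raise ValueError("item contains non-printable characters")
    return cleaned


if __name__ == "__main__":
    print(validate_item("  oat milk  "))
```

Validation of this kind complements, but does not replace, safe handling further down the stack, such as parameterized queries in the backend.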

Authentication and authorization are the areas where MCP servers differ most from regular API servers. Let’s see a few tips.

MCP Server Authentication Best Practices

The MCP specification does not mandate authentication mechanisms, but defines optional ones at the HTTP level.

Keep in mind that many MCP servers run locally, often as Docker containers, and are little more than wrappers for an API client. Without extra authentication, the MCP server relies on the API server to authenticate users.

In these cases, the MCP server documents an environment variable through which you provide the API key. It then reads this key and uses it wherever necessary:

Python:

import os

API_KEY = os.getenv("WEATHER_API_KEY")

This strategy is far from ideal, as the API_KEY will be stored in a plain-text configuration file. What happens if the API_KEY is compromised?
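If you do rely on an environment variable, a little care reduces accidental exposure. The sketch below fails fast when the key is missing and masks the key before it reaches any log. The `load_api_key` and `mask` helpers and the `MissingCredentialError` class are hypothetical names. Note that this does not address the underlying problem of a long-lived secret stored in plain text.

```python
import os


class MissingCredentialError(RuntimeError):
    """Raised when a required credential is not configured."""


def load_api_key(var_name: str = "WEATHER_API_KEY") -> str:
    """Read an API key from the environment, failing fast if it is absent."""
    key = os.getenv(var_name, "").strip()
    if not key:
        raise MissingCredentialError(f"environment variable {var_name} is not set")
    return key


def mask(secret: str, visible: int = 4) -> str:
    """Return a log-safe version of a secret that shows only its last characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]


if __name__ == "__main__":
    try:
        print("Using API key", mask(load_api_key()))
    except MissingCredentialError as error:
        print("Configuration error:", error)
```

Failing at startup is usually easier to diagnose than an authentication error surfacing later in the middle of a tool call.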

Also, since running an MCP server locally is inconvenient, clients are starting to support remote MCP servers. That’s why the MCP specification defines HTTP authentication via OIDC and OAuth 2.1.

The library we’ve used in our example, FastMCP, implements several authentication providers. For example, implementing OAuth authentication is as simple as setting the auth parameter of your mcp object with the right endpoints and configuration:

Python:

from fastmcp import FastMCP
from fastmcp.server.auth.providers.workos import AuthKitProvider

auth = AuthKitProvider(
    authkit_domain="https://your-project.authkit.app",
    base_url="https://your-fastmcp-server.com"
)

mcp = FastMCP(name="Enterprise Server", auth=auth)

Building upon this, you can strengthen authentication further by implementing just-in-time (JIT) access, which grants access:

- Only when needed

- Only for the right amount of time

This way, you can mitigate the impact of a static element that could be compromised at a later stage.
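The core idea behind just-in-time access, a credential that only works for a limited time, can be sketched with the standard library. This is an in-process illustration only: `ShortLivedToken`, `issue_token`, and `is_valid` are hypothetical names, and a real JIT provider issues and verifies credentials on its own infrastructure.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShortLivedToken:
    value: str
    expires_at: float  # seconds since the epoch


def issue_token(ttl_seconds: float, now: Optional[float] = None) -> ShortLivedToken:
    """Issue a random token that expires ttl_seconds after it is issued."""
    issued_at = time.time() if now is None else now
    return ShortLivedToken(secrets.token_urlsafe(32), issued_at + ttl_seconds)


def is_valid(token: ShortLivedToken, now: Optional[float] = None) -> bool:
    """A token is accepted only before its expiry time."""
    current = time.time() if now is None else now
    return current < token.expires_at


if __name__ == "__main__":
    token = issue_token(ttl_seconds=300)
    print("valid right after issuing:", is_valid(token))
    print("valid ten minutes later:", is_valid(token, now=time.time() + 600))
```

Because the token stops working on its own, a copy leaked from a log or a configuration file loses its value once the time window closes.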


In terms of code, implementation won’t differ much. You only need to replace the endpoints of your service with the ones from your JIT provider.

MCP Server Authorization Best Practices

The biggest security problems an MCP server can cause are:

- Leaking information

- Allowing a user to escalate privileges

That’s why it’s so important to implement authorization at the MCP server level rather than relying on prompts or client security.

For that:

- Uniquely identify each client, its roles, and its permissions.

- Implement a permission check on each tool.
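The two points above can be sketched as a decorator that checks the caller's permissions before a tool runs. Everything here is an assumption made for illustration: the `Client` class, the permission names, and the `require_permission` decorator are hypothetical. In a real server, the client identity would come from the verified access token rather than from a function argument.

```python
from dataclasses import dataclass, field
from functools import wraps


@dataclass(frozen=True)
class Client:
    client_id: str
    permissions: frozenset = field(default_factory=frozenset)


class PermissionDenied(Exception):
    """Raised when a client calls a tool it is not allowed to use."""


def require_permission(permission: str):
    """Decorator that rejects the call unless the client holds the permission."""
    def decorator(tool):
        @wraps(tool)
        def wrapper(client: Client, *args, **kwargs):
            if permission not in client.permissions:
                raise PermissionDenied(f"{client.client_id} lacks {permission}")
            return tool(client, *args, **kwargs)
        return wrapper
    return decorator


# One list per client, so a client can never read another client's items.
shopping_lists: dict = {}


@require_permission("list:write")
def add_item(client: Client, item: str) -> str:
    shopping_lists.setdefault(client.client_id, []).append(item)
    return f"{item} added to shopping list"


@require_permission("list:read")
def list_items(client: Client) -> list:
    return list(shopping_lists.get(client.client_id, []))
```

The check lives in the server code, so it holds no matter what the prompt says or which client connects. Scoping the data by client ID also covers the information-leak case, not just the privilege-escalation one.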

A Note on MCP Clients

Another difference between API clients and MCP clients is how they process your data:

- An API client typically keeps the data it consumes. It’s uncommon for the client to leak information unless it is purposely designed to do so.

- An MCP client, such as an LLM desktop application, may use conversations to train its models, depending on the provider and its settings. As such, data provided to one client could potentially surface for a different user.

Do keep tight control over which clients connect to your MCP servers, and educate your users on the security risks of AI.

Other Challenges of MCP Servers

Keep scalability and stability in mind when designing your MCP servers.

Scalability: Handling an additional layer of communication adds some latency and, more importantly, additional complexity.

When dealing with systems composed of hundreds of tools and resources, running MCP servers can introduce operational overhead and raise the failure rate across components.

Do perform load testing on both the MCP server side and with different prompts on the client side.
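As a starting point for the server side, here is a sketch of a tiny load-test harness built on the standard library. The `load_test` and `timed_call` helpers are hypothetical names, and `fake_tool` is a stand-in that only sleeps; you would replace it with a function that calls your MCP server through a real client.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(func, *args) -> float:
    """Return the wall-clock duration of one call, in seconds."""
    start = time.perf_counter()
    func(*args)
    return time.perf_counter() - start


def load_test(func, args_list, concurrency: int = 8) -> dict:
    """Call func once per entry in args_list from a thread pool and summarize latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        durations = list(pool.map(lambda args: timed_call(func, *args), args_list))
    return {
        "calls": len(durations),
        "mean_s": statistics.mean(durations),
        "max_s": max(durations),
    }


def fake_tool(item: str) -> str:
    """Stand-in for a tool call; replace it with a real call to your server."""
    time.sleep(0.01)
    return f"{item} added to shopping list"


if __name__ == "__main__":
    print(load_test(fake_tool, [(f"item-{i}",) for i in range(50)]))
```

Running the same harness at different concurrency levels gives a rough picture of how latency grows with load. A dedicated load-testing tool is a better fit once you need sustained or distributed traffic.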

Adoption in the ecosystem: Though we mentioned that MCP is gaining traction at the moment, the current AI landscape is fast-paced, and things may change abruptly from one day to the next. MCP, while a useful and recognized standard, might change in the next few years as its purpose shifts and as the big companies behind it change direction.

Do use popular, well-maintained frameworks to build your MCP servers. Catching up with the changes should be as easy as upgrading to a new version.

Conclusion

MCP is a much-needed standard in the interconnected and interoperable world of agentic and AI-driven tools: every day, a growing share of network communication is carried out by non-human entities. By providing context, purpose, and information schemas, MCP servers make this data flow easier.

When working with MCP servers, you must be aware of security risks, as exposing information without proper safeguards may lead to unauthorized access or data leaks. It’s important to apply principles such as Zero Trust or Least Privilege to secure related connections. Consider using Just-in-time access to reduce vulnerabilities and limit the impact of potential threats.